He’s Dead, Jim

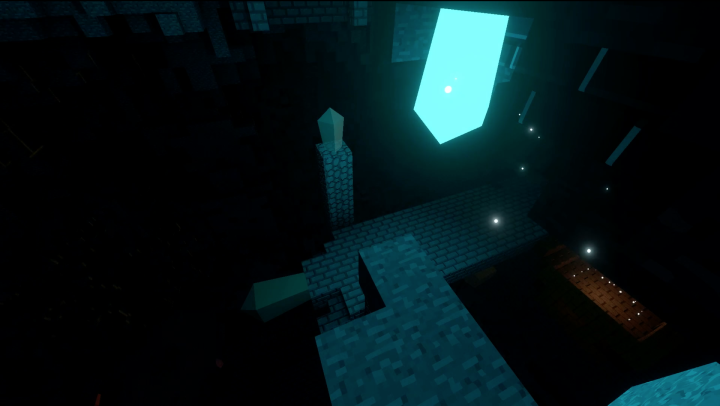

I am in fact not dead, and neither is this project. The past several months have been busy with external issues, virtual reality experiments, and, finally, some proper work on Freedom of Motion, some of which I will share with you now.

Voxels – A History

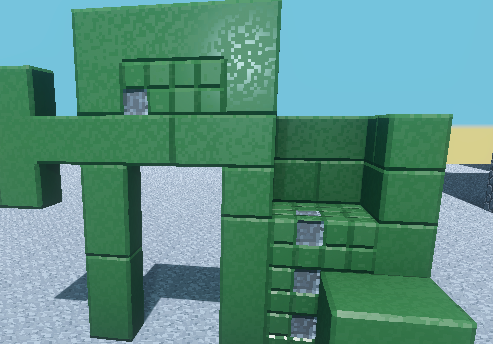

A big change that took up the bulk of my time was the overhaul of the voxel engine. My previous implementation relied on a naive octree structure. I originally chose octrees because they promised to save memory; after all, it makes sense to represent a big area of identical data as a single object. While this system worked, it had a number of limitations and drawbacks.

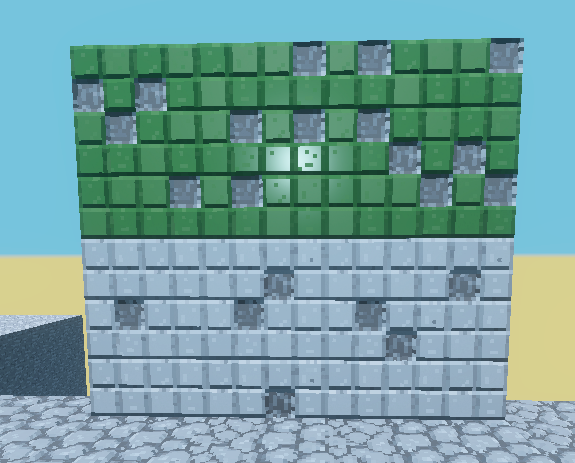

Meshing performance was quite poor. FoM uses 16x16x16 meter chunks with a minimum voxel size of 0.5 meters (32 voxels per axis). On a dry load of the starting area of the map in Alpha 6, the fastest chunk meshed in around 4 ms and the average was 22 ms. The slowest chunks, however, took 938 ms. While meshing was threaded and didn't affect the frame rate, a performance spread like this was a major red flag.

Memory usage was also poor to abysmal. A uniform chunk had the relatively light footprint of a single volume, but the structure did not scale well. A single octree node used three doubles for its position, a float for its size, a 16-bit unsigned integer for material type, a 16-bit uint for form type, and two additional bytes for the planned but unused per-side connection and smoothness values, all adding up to a whopping 240 bits per unique volume of voxels. In the worst case, with the octree split all the way down, a single chunk would take up over 1.2 megabytes of memory.

Clearly, something had to be done.

The New Hotness

Memory and storage in the new voxel system are back to basics. Chunks represent voxels as a simple flat array. A single voxel now uses 32 bits: a 16-bit material ID and a 16-bit form field for storing additional information (more on that later). Chunks are now identified by a 64-bit positional ID, as defined in VoxelFarm's WorldOctree documentation. While the actual Chunk implementation also contains dirty flags, C# edit events, and any entity data, the voxel data itself occupies a consistent 131 kB of memory (32,768 voxels at 4 bytes each).
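To make the flat layout concrete, here is a minimal Python sketch of a chunk's voxel storage. It assumes the material ID sits in the high 16 bits and the form field in the low 16 bits, and that x varies fastest in the array; both choices, and the names `pack_voxel`, `index` and `Chunk`, are hypothetical. The engine itself is C# in Unity, where each voxel would be a struct.

```python
from array import array

CHUNK_METERS = 16
VOXEL_METERS = 0.5
SIZE = int(CHUNK_METERS / VOXEL_METERS)  # 32 voxels along each axis
VOLUME = SIZE ** 3                       # 32,768 voxels per chunk


def pack_voxel(material, form):
    """Pack a 16-bit material ID and a 16-bit form field into one 32-bit value.

    Assumed layout: material in the high half, form in the low half.
    """
    if not (0 <= material <= 0xFFFF and 0 <= form <= 0xFFFF):
        raise ValueError("material and form must each fit in 16 bits")
    return (material << 16) | form


def unpack_voxel(voxel):
    """Return (material, form) from a packed 32-bit voxel."""
    return voxel >> 16, voxel & 0xFFFF


def index(x, y, z):
    """Flat-array index with x varying fastest (an assumed ordering)."""
    return x + SIZE * (y + SIZE * z)


class Chunk:
    """Voxel storage only; dirty flags, edit events and entities are omitted."""

    def __init__(self, fill=0):
        # One contiguous buffer of unsigned ints, one per voxel.
        self.voxels = array("I", [fill]) * VOLUME
        if self.voxels.itemsize != 4:
            raise RuntimeError("expected a 4-byte unsigned int on this platform")

    def get(self, x, y, z):
        return self.voxels[index(x, y, z)]

    def set(self, x, y, z, voxel):
        self.voxels[index(x, y, z)] = voxel

    def nbytes(self):
        return len(self.voxels) * self.voxels.itemsize


chunk = Chunk()
chunk.set(3, 4, 5, pack_voxel(material=7, form=2))
print(unpack_voxel(chunk.get(3, 4, 5)))  # (7, 2)
print(chunk.nbytes())                    # 131072
```

Because every voxel has the same fixed size, the memory footprint is identical whether the chunk is empty or completely varied.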

As in the old engine, chunks are stored on disk in region files of 8x8x8 chunks. When writing to disk, runs of identical voxels are run-length encoded, so a chunk containing uniform voxel data takes up only 112 bits. Because each run stores a 16-bit length alongside its 32-bit voxel, the worst case (a chunk where no two consecutive voxels match) carries a 50 percent overhead over the raw data, but I expect the real-world savings of this system to be substantial. Another significant addition is that loading regions into memory, as well as generating chunks, is now threaded. This was a major source of per-frame lag in Alpha 6 when first loading a level and should now be alleviated.
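The run-length scheme can be sketched in a few lines of Python. The record layout (a little-endian 16-bit length followed by the 32-bit voxel) and the function names are assumptions for illustration, not FoM's actual file format; runs are capped at 65,535 so the length always fits in 16 bits. The sketch covers only the run data, so it omits whatever per-chunk header the real format stores.

```python
import struct

MAX_RUN = 0xFFFF               # largest value a 16-bit unsigned length can hold
RECORD = struct.Struct("<HI")  # uint16 run length + uint32 voxel, 6 bytes, no padding


def rle_encode(voxels):
    """Collapse consecutive identical voxels into (length, voxel) runs."""
    runs = []
    for v in voxels:
        if runs and runs[-1][1] == v and runs[-1][0] < MAX_RUN:
            runs[-1][0] += 1
        else:
            runs.append([1, v])
    return [(n, v) for n, v in runs]


def rle_decode(runs):
    """Expand (length, voxel) runs back into a flat voxel list."""
    out = []
    for n, v in runs:
        out.extend([v] * n)
    return out


def serialize(runs):
    return b"".join(RECORD.pack(n, v) for n, v in runs)


VOLUME = 32 * 32 * 32
uniform = [0] * VOLUME
worst = [i % 2 for i in range(VOLUME)]  # no two consecutive voxels match

print(len(serialize(rle_encode(uniform))) * 8)  # 48 bits of run data
print(len(serialize(rle_encode(worst))))        # 196608 bytes
print(VOLUME * 4)                               # 131072 bytes raw
```

The worst case is exactly 1.5 times the raw size, about 197 kB, which is still far below the old octree's worst case.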

The improvement over the previous engine is clear:

| | Min Chunk Size | Max Chunk Size |
|---|---|---|
| Original | 240 bits | 1.25 MB |
| New | 112 bits | 131 kB |
| % Reduction | 53% | 89% |

Even in the worst case, where run-length encoding adds 50 percent on top of the 131 kB of raw data (about 197 kB), an especially diverse chunk stays well below the maximum of the old system.

Meshing performance was harder to compare. Every part of the voxel engine has been completely rewritten, and since none of the levels we built previously will be used in the final game, I decided that the not-insignificant effort of building a migration system for that old data was not a good use of time. Unfortunate, but we move forward. Performance was instead tested in a new scene, and I think the numbers speak for themselves:

| | Min Time | Max Time | Average |
|---|---|---|---|
| Original | 3.74 ms | 913.16 ms | 17.81 ms |
| New | 1.44 ms | 17.36 ms | 2.6 ms |
| % Reduction | 61.6% | 98% | 85% |

I think losing a few exploratory levels is worth it for a performance gain like this.

Why?

There are a number of factors at play here. First, using less memory tends to make things faster, since the CPU simply has to chew through less data. That easily explains the worst-case improvement, but we are still seeing dramatic gains even for empty chunks. Surely, since we now loop through a 32,768-element array even when a chunk has nothing in it, the process should be slower? It isn't, because all of the data we need sits side by side in memory. Voxels are structs, not objects like the octree nodes were, so an array of them stores the values inline. That means we no longer have to dive into the heap to reach each object (slow), nor dance all over memory as we traverse the octree.
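The locality argument can be illustrated with a simple face-culling pass over a flat array. In this hypothetical Python sketch (not FoM's mesher), material 0 is assumed to be air, and faces on the chunk boundary are simply counted as exposed rather than checked against neighboring chunks. The point is the access pattern: every neighbor is a fixed offset (1, 32 or 1,024) into the same array. Python lists hold references, so this only mirrors the pattern; the cache benefit itself comes from C# storing struct arrays inline.

```python
SIZE = 32
STRIDES = (1, SIZE, SIZE * SIZE)  # index offset for one step along x, y, z
AIR = 0  # assumed "empty" material ID


def index(x, y, z):
    return x + SIZE * (y + SIZE * z)


def count_exposed_faces(voxels):
    """Count faces of solid voxels that touch air or the chunk boundary."""
    faces = 0
    for z in range(SIZE):
        for y in range(SIZE):
            for x in range(SIZE):
                i = index(x, y, z)
                if voxels[i] == AIR:
                    continue
                for coord, stride in zip((x, y, z), STRIDES):
                    # Negative side: boundary, or an air neighbor one stride back.
                    if coord == 0 or voxels[i - stride] == AIR:
                        faces += 1
                    # Positive side: boundary, or an air neighbor one stride ahead.
                    if coord == SIZE - 1 or voxels[i + stride] == AIR:
                        faces += 1
    return faces


empty = [AIR] * SIZE ** 3
single = list(empty)
single[index(10, 10, 10)] = 1
full = [1] * SIZE ** 3

print(count_exposed_faces(empty))   # 0
print(count_exposed_faces(single))  # 6
print(count_exposed_faces(full))    # 6144, only the outer shell
```

Even the empty chunk visits all 32,768 entries, but it does so in order, with no pointer chasing between nodes.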

The previous octree implementation also scaled poorly to large worlds, since a new parent had to be created every time a chunk outside the existing structure was required. In the new system, chunks are stored in a simple dictionary keyed by their 64-bit ChunkID, which makes adding a new chunk or jumping to a specific one a very simple operation.
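A dictionary keyed by a 64-bit integer might look like the Python sketch below. The bit layout (three biased 21-bit coordinates, 63 bits in total, so the key fits in a signed 64-bit integer) is purely a hypothetical stand-in, not VoxelFarm's WorldOctree scheme. The hypothetical `region_of` helper maps a chunk to its 8x8x8 region file using floor division, so negative coordinates land in the right region.

```python
BITS = 21                 # bits per axis; 3 * 21 = 63 bits total
MASK = (1 << BITS) - 1
BIAS = 1 << (BITS - 1)    # shifts signed coordinates into 0..2**21-1
REGION = 8                # chunks per region edge (8x8x8 region files)


def chunk_id(cx, cy, cz):
    """Pack signed chunk coordinates into one integer key (hypothetical layout)."""
    for c in (cx, cy, cz):
        if not -BIAS <= c < BIAS:
            raise ValueError("chunk coordinate out of range")
    return ((cx + BIAS) << (2 * BITS)) | ((cy + BIAS) << BITS) | (cz + BIAS)


def chunk_coords(cid):
    """Inverse of chunk_id."""
    return (((cid >> (2 * BITS)) & MASK) - BIAS,
            ((cid >> BITS) & MASK) - BIAS,
            (cid & MASK) - BIAS)


def region_of(cx, cy, cz):
    """Region file coordinates; floor division handles negative chunks."""
    return (cx // REGION, cy // REGION, cz // REGION)


chunks = {}  # chunk_id -> chunk; no parent nodes to create as the world grows
chunks[chunk_id(-1, 0, 5)] = "chunk data"
print(chunk_id(-1, 0, 5) in chunks)      # True
print(chunk_coords(chunk_id(-1, 0, 5)))  # (-1, 0, 5)
print(region_of(-1, 0, 5))               # (-1, 0, 0)
```

Growing the world in any direction is just another dictionary insert; there is no tree to re-root.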

Further Improvement

There are still some hot spots in the engine that must be addressed. For example, I am currently using Unity's mesh collider for collision. Regenerating it when a chunk changes is quite slow (up to 30 ms by itself in current testing) and cannot be threaded. Additionally, while the overall framework for lower level-of-detail meshes at far distances is in place, it currently only works with procedurally generated chunks. Sub-sampling of real data still needs to be implemented, and there are issues with seams between levels of detail that need to be resolved before that feature is ready for prime time.

Next Time

No new alpha build yet. That will occur when I have implemented an actual gameplay loop (gasp!).

I’ve said it before, and I’ll say it again: I want to increase the pace of updates and posts. An eight-month gap says that I’m quite bad at following through on this; however, I have new features to share and new tools to show off, and I will do so over the next few weeks. Stay tuned.